Lambda Cold Starts - .NET 7 Native AOT vs .NET 6 Managed Runtime

Want to learn more about AWS Lambda and .NET? Check out my A Cloud Guru course on ASP.NET Web API and Lambda.

Download full source code.

Introduction

Cold starts are a common concern for .NET users of Lambda functions. The first invocation of a Lambda function requires the execution environment to be created, the binaries to be downloaded, and the initialization code to be run.

There are strategies to mitigate this, such as provisioned concurrency, and “pinging” the function to keep it warm.

But with the release of .NET 7, a new approach is possible: native AOT compilation.

I’m not going to go into the details of how it works; you can find out more from this post by my colleague James Eastham.

If you are not familiar with Lambda functions, they are a function-as-a-service offering that can run your .NET code.

tl;dr

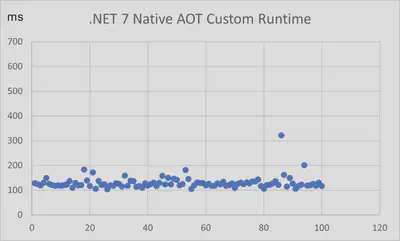

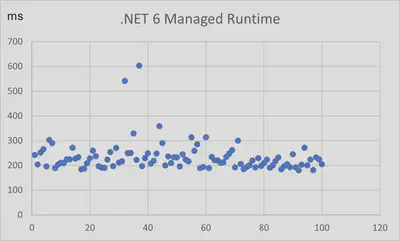

I created two Lambda functions, one with .NET 6 using the Lambda managed runtime, and the other with .NET 7 using a custom runtime and native AOT compilation. I then measured the cold start time of each function by forcing 100 cold starts for each function.

The average cold start time for the .NET 7 native AOT function was 129.83 ms, compared with 233 ms for the .NET 6 managed runtime function, a reduction of roughly 44%.

If cold starts are a concern for your .NET application, you should consider updating to .NET 7 and using native AOT compilation.

The graphs show 100 runs of each function, with cold start time in milliseconds on the vertical axis.

At the time of writing, native AOT is not supported on Arm64 Lambda functions.

Get the tools

Install or update the latest tooling; this lets you deploy and run Lambda functions.

    dotnet tool install -g Amazon.Lambda.Tools
    dotnet tool update -g Amazon.Lambda.Tools

Install or update the latest Lambda function templates.

    dotnet new --install Amazon.Lambda.Templates

Install .NET 7.

Install and run Docker Desktop.

Comparing .NET 7 Native AOT and .NET 6 Managed Runtime Cold Starts

This is not going to be a scientific comparison, but I’ve seen largely similar results across multiple runs.

You are going to build and deploy a .NET 7 Lambda function using native AOT compilation, and a .NET 6 Lambda function using a managed runtime.

.NET 7 Native AOT Function

1. Create the function

Create a new Lambda function with the Lambda AOT template -

    dotnet new lambda.NativeAOT --name LambdaNativeAOT

Change to the LambdaNativeAOT/src/LambdaNativeAOT directory.

Open the Function.cs file and replace the code with the following -

    using Amazon.Lambda.Core;
    using Amazon.Lambda.RuntimeSupport;
    using Amazon.Lambda.Serialization.SystemTextJson;
    using System.Text.Json.Serialization;

    namespace LambdaNativeAOT;

    public class Function
    {
        // True until the first invocation in this execution environment has run.
        private static bool delayNextInvocation = true;

        private static async Task Main()
        {
            Console.WriteLine("In init");
            Func<string, ILambdaContext, string> handler = FunctionHandler;
            await LambdaBootstrapBuilder.Create(handler, new SourceGeneratorLambdaJsonSerializer<LambdaFunctionJsonSerializerContext>())
                .Build()
                .RunAsync();
        }

        public static string FunctionHandler(string input, ILambdaContext context)
        {
            if (delayNextInvocation)
                Task.Delay(10000).GetAwaiter().GetResult(); // slows down the first invocation
            delayNextInvocation = false;
            return input.ToUpper();
        }
    }

    // Source-generated JSON serializer context, needed because native AOT cannot rely on reflection.
    [JsonSerializable(typeof(string))]
    public partial class LambdaFunctionJsonSerializerContext : JsonSerializerContext { }

The code is pretty simple. On the first invocation in each execution environment, the function handler pauses for 10 seconds. While an environment is busy, it cannot serve another request, so invoking the function repeatedly during that pause forces Lambda to create more execution environments, and you see multiple cold starts.
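To see why the 10 second pause matters, here is a minimal sketch of a toy model. It is not how Lambda actually schedules requests. It assumes each invocation reuses an idle execution environment if one exists and otherwise triggers a cold start, and it ignores the init time itself. The function name `CountColdStarts`, its parameters, and the timings are all hypothetical, chosen only for illustration.

```csharp
using System;
using System.Collections.Generic;

// Toy model: counts how many invocations would need a new execution environment.
// arrivalGapMs: time between consecutive invocations arriving.
// firstRunMs:   how long the first invocation in a new environment keeps it busy.
// warmRunMs:    how long a later invocation keeps a reused environment busy.
static int CountColdStarts(int invocations, double arrivalGapMs, double firstRunMs, double warmRunMs)
{
    // One entry per environment: the time at which it becomes idle again.
    var busyUntil = new List<double>();
    int coldStarts = 0;

    for (int i = 0; i < invocations; i++)
    {
        double now = i * arrivalGapMs;
        int idleIndex = busyUntil.FindIndex(freeAt => freeAt <= now);

        if (idleIndex >= 0)
        {
            // An idle environment exists, so this is a warm start.
            busyUntil[idleIndex] = now + warmRunMs;
        }
        else
        {
            // Every environment is busy, so a new one is created (cold start).
            busyUntil.Add(now + firstRunMs);
            coldStarts++;
        }
    }

    return coldStarts;
}

// 100 invocations, 10 ms apart, with the 10 second pause on the first run.
Console.WriteLine(CountColdStarts(100, 10, 10_000, 5)); // prints 100

// Same traffic without the pause: the first environment is idle again in time.
Console.WriteLine(CountColdStarts(100, 10, 5, 5)); // prints 1
```

In this model, all 100 requests arrive within the first second, well before any environment finishes its 10 second pause, so none of them can reuse an existing environment.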

2. Build and publish the function

Make sure Docker is running.

From the command line run -

    dotnet lambda deploy-function LambdaNativeAOT

This compilation will take a while, especially the first time you run it, as it needs to download the required Docker image.

You will be asked to select an IAM role or create a new one. At the bottom of the list will be *** Create new IAM Role ***; type in the associated number.

You will be asked for a role name, enter LambdaNativeAOTRole.

After this, you will be prompted to select the IAM policy to attach to the role. Choose AWSLambdaBasicExecutionRole; it is number 6 on my list, but the number may differ on yours.

After a few seconds, the function will be deployed.

Don’t invoke it yet!

.NET 6 Managed Runtime Function

1. Create the function

From the command line create a .NET 6 Lambda function with the following command -

    dotnet new lambda.EmptyTopLevelFunction --name LambdaNet6ColdStartComparison

Change to the LambdaNet6ColdStartComparison/src/LambdaNet6ColdStartComparison directory.

Open the Function.cs file and replace the code with the following -

    using System.Text.Json.Serialization;
    using Amazon.Lambda.Core;
    using Amazon.Lambda.RuntimeSupport;
    using Amazon.Lambda.Serialization.SystemTextJson;

    // True until the first invocation in this execution environment has run.
    bool delayNextInvocation = true;
    Console.WriteLine("In init");

    var handler = (string input, ILambdaContext context) =>
    {
        if (delayNextInvocation)
            Task.Delay(10000).GetAwaiter().GetResult(); // slows down the first invocation
        delayNextInvocation = false;
        return input.ToUpper();
    };

    await LambdaBootstrapBuilder.Create(handler, new SourceGeneratorLambdaJsonSerializer<LambdaFunctionJsonSerializerContext>())
        .Build()
        .RunAsync();

    [JsonSerializable(typeof(string))]
    public partial class LambdaFunctionJsonSerializerContext : JsonSerializerContext { }

Like the function above, the first invocation will pause for 10 seconds, allowing you to force multiple cold starts.

2. Build and publish the function

From the command line run -

    dotnet lambda deploy-function LambdaNet6ColdStartComparison

Follow similar steps as above to create a new IAM role named LambdaNet6ColdStartComparisonRole, and attach the AWSLambdaBasicExecutionRole policy.

After a few seconds, the function will be deployed.

Invoke the functions

Time for the fun.

You are going to invoke the functions repeatedly and quickly, forcing multiple cold starts. You are going to write the results of the invocations to files.

From the command line run -

    dotnet new console -n InvokeLambdaFunctionInParallel

Add the NuGet package AWSSDK.Lambda to the new project.

Then open the Program.cs file and replace the code with the following -

    using System.Text;
    using System.Text.Json;
    using Amazon.Lambda;
    using Amazon.Lambda.Model;

    AmazonLambdaClient client = new AmazonLambdaClient();

    if (args.Length != 1)
    {
        Console.WriteLine("Usage: dotnet run FunctionName");
        return;
    }

    string functionName = args[0];
    Console.WriteLine($"Invoking {functionName}...");

    List<Task<InvokeResponse>> taskList = new List<Task<InvokeResponse>>();

    // Start 100 invocations without awaiting each one, so they run in parallel.
    for (int i = 0; i < 100; i++)
    {
        Console.WriteLine($"Invoking {i}");
        var request = new InvokeRequest
        {
            FunctionName = functionName,
            Payload = JsonSerializer.Serialize("hello world"),
            LogType = LogType.Tail // return the tail of the execution log with the response
        };
        taskList.Add(client.InvokeAsync(request));
    }

    await Task.WhenAll(taskList);

    // The log tail is base64 encoded; decode it and write it out.
    foreach (var task in taskList)
    {
        var response = await task;
        var log = Encoding.UTF8.GetString(Convert.FromBase64String(response.LogResult));
        Console.WriteLine(log);
    }

From the command line run -

    dotnet run LambdaNativeAOT > LambdaNativeAOTOutput.txt
    dotnet run LambdaNet6ColdStartComparison > LambdaNet6ColdStartComparisonOutput.txt

Open the two files and look for the Init Duration: information. If the functions ran correctly, every invocation should have caused a cold start. If Init Duration: is not present in every log, you may need to increase the 10 second delay that runs on the first invocation.

In the attached zip file I included the output from my runs and a CSV file with a summary.
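If you would rather compute a summary from your own output files, here is a minimal sketch of a small console program that does it. It is not the tool I used to produce the CSV. It assumes each invocation's log contains a line starting with `REPORT RequestId:` and that cold starts include `Init Duration: <number> ms` on that line. The helper names `ParseInitDurations` and `CountInvocations` are made up for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.IO;
using System.Linq;
using System.Text.RegularExpressions;

// Returns every "Init Duration: <n> ms" value found in the log lines.
// Lambda only reports an Init Duration when the invocation was a cold start.
static List<double> ParseInitDurations(IEnumerable<string> logLines)
{
    var pattern = new Regex(@"Init Duration:\s*(\d+(?:\.\d+)?)\s*ms");
    var found = new List<double>();
    foreach (var logLine in logLines)
    {
        var match = pattern.Match(logLine);
        if (match.Success)
        {
            found.Add(double.Parse(match.Groups[1].Value, CultureInfo.InvariantCulture));
        }
    }
    return found;
}

// Counts REPORT lines; one is written per invocation.
static int CountInvocations(IEnumerable<string> logLines) =>
    logLines.Count(logLine => logLine.TrimStart().StartsWith("REPORT RequestId:", StringComparison.Ordinal));

if (args.Length == 1)
{
    var fileLines = File.ReadAllLines(args[0]);
    var initDurations = ParseInitDurations(fileLines);

    Console.WriteLine($"Invocations: {CountInvocations(fileLines)}");
    Console.WriteLine($"Cold starts: {initDurations.Count}");

    if (initDurations.Count > 0)
    {
        Console.WriteLine($"Average init duration: {initDurations.Average():F2} ms");
        Console.WriteLine($"Min: {initDurations.Min():F2} ms, Max: {initDurations.Max():F2} ms");
    }
}
else
{
    Console.WriteLine("Usage: dotnet run OutputFile.txt");
}
```

Put this in the Program.cs of a separate console project and run it with `dotnet run LambdaNativeAOTOutput.txt`. If the number of cold starts is lower than the number of invocations, some invocations reused a warm execution environment.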

Conclusion

Even though I show only 100 cold start times for each type of function, I have run this test many times and the results are consistent.

The .NET 7 Native AOT function has a significantly faster cold start than the .NET 6 managed runtime function.
